Our Developer Relationship Associate, Cheuk Ting Ho, recently put together this six-part tutorial to demonstrate how to get started with TerminusDB and TerminusCMS using the Python client.

The six videos cover the key steps of working with TerminusDB from the Python client, including setting up a blank database, importing data from CSVs, updating and linking data, preparing data for analysis, and lots more.

For the full tutorial, visit our documentation site: Getting Started with Python Tutorial.

Lesson 1: Using the Python client to start a project and create a database with schema

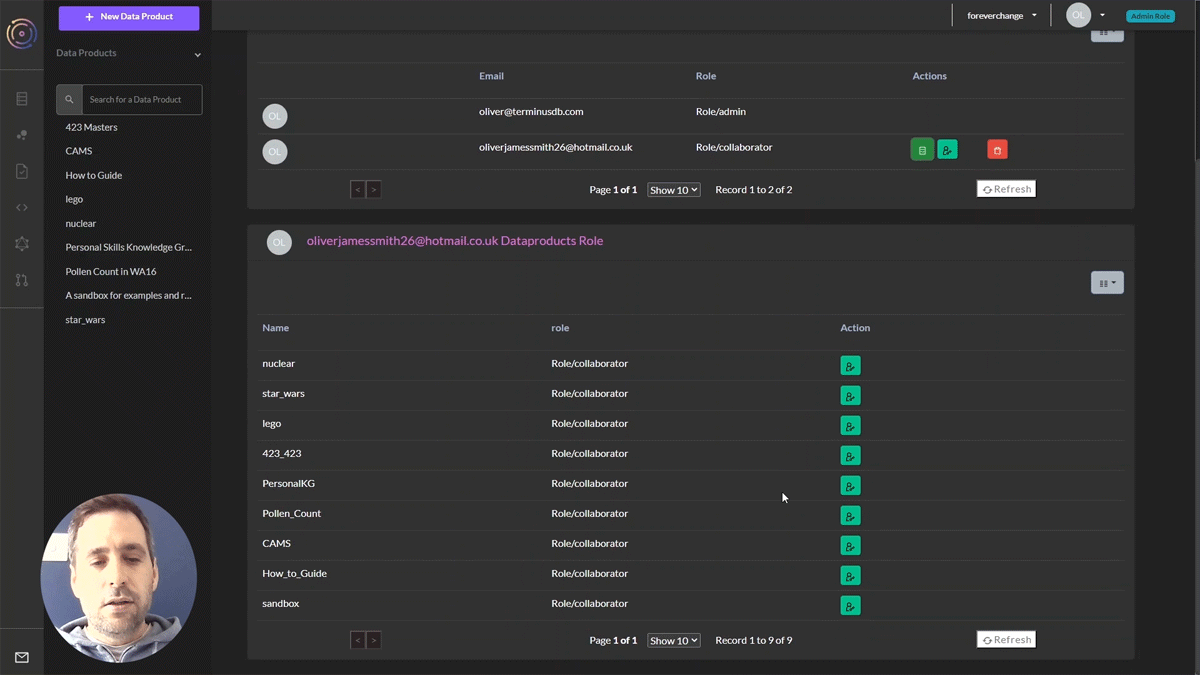

Cheuk skips over installing TerminusDB, as instructions for installing it as a Docker container are in our documentation. If you would rather not install anything, TerminusCMS lets you get started straight away; sign up if you haven’t already.

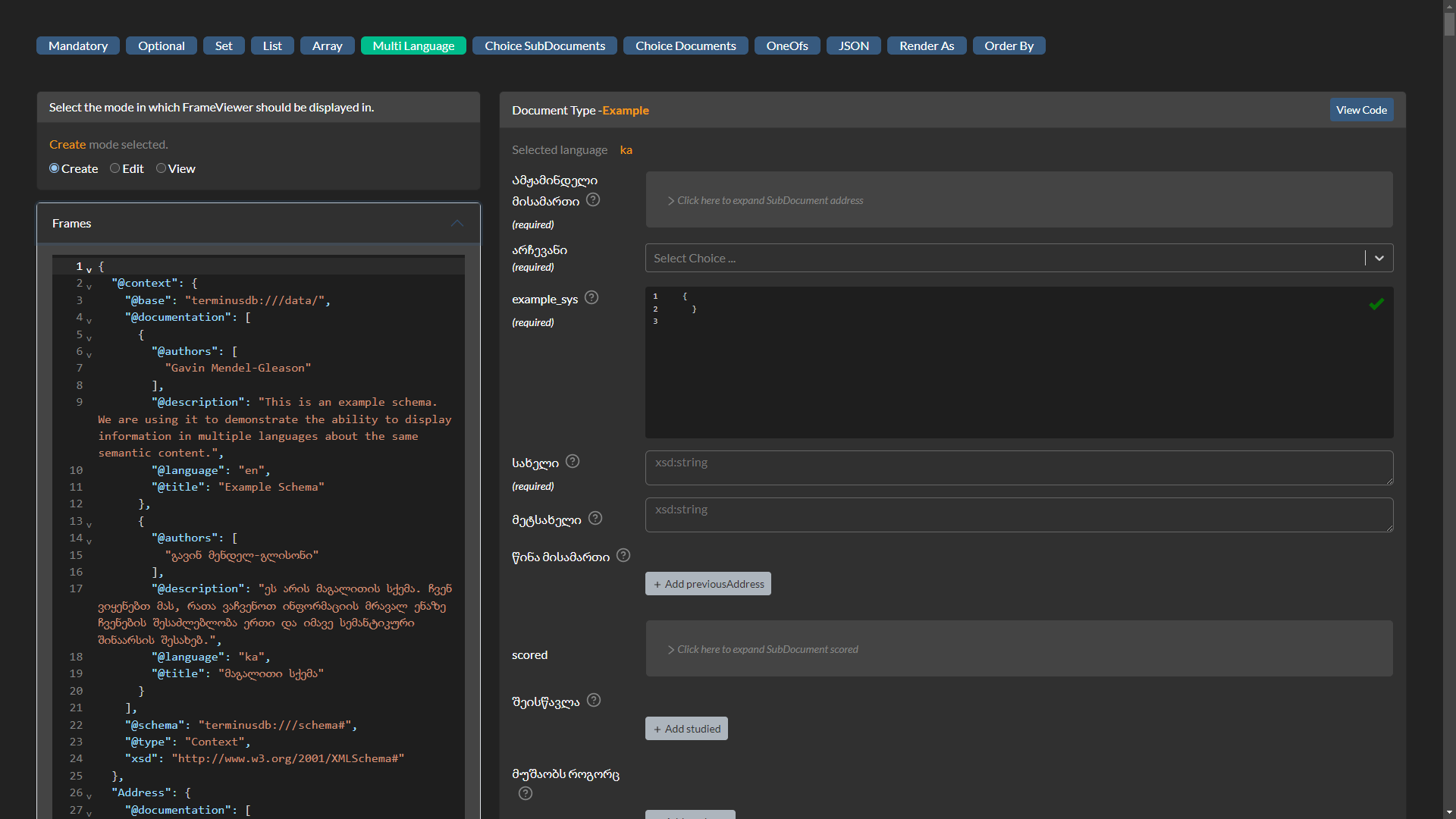

This lesson shows you how to start a project, how to create a schema in Python, and then how to commit the schema to TerminusDB or TerminusCMS to create the new database.

Tutorial: Lesson 1 – Using the Python client to start a project and create a database with schema

Lesson 2: Importing CSV files into your database

Once you have created your database and schema in lesson 1, the next job is to import some data into it. In lesson 2, Cheuk shows you how to use the TerminusDB importcsv command. The lesson also demonstrates how to link data together during the import; the example is a list of employees, some of whom are managers of others in the dataset.
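To make the idea of linking concrete, here is a minimal plain-Python sketch of what a manager column turns into once rows become documents. It does not use the TerminusDB client and is not what importcsv does internally; the CSV columns, the sample people, and the `Employee/<id>` id scheme are all hypothetical.

```python
import csv
import io

# Hypothetical sample data: the column names and people are made up.
SAMPLE_CSV = """Employee id,Name,Manager
001,Alice,
002,Bob,001
003,Carol,001
004,Dave,002
"""


def rows_to_documents(csv_text):
    """Turn CSV rows into JSON-style documents, storing each manager as a
    reference to another document's id rather than as a plain value."""
    docs = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        doc = {
            "@type": "Employee",
            "@id": f"Employee/{row['Employee id']}",  # hypothetical id scheme
            "name": row["Name"],
        }
        if row["Manager"]:  # an empty cell means no manager
            doc["manager"] = f"Employee/{row['Manager']}"
        docs.append(doc)
    return docs


docs = rows_to_documents(SAMPLE_CSV)
# Every link must point at a document that is part of the same import.
known_ids = {d["@id"] for d in docs}
assert all(d["manager"] in known_ids for d in docs if "manager" in d)
print(docs[1])
# {'@type': 'Employee', '@id': 'Employee/002', 'name': 'Bob', 'manager': 'Employee/001'}
```

The point of the design is that a manager is stored as a reference to another employee document rather than as a copied name, so the relationship survives even if that employee's details change later.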

Lesson 3: Importing data using a Python script

When data is more complex, importing directly from CSV might not be the best approach. Instead, you can use a Python script to import objects (which become documents in TerminusDB or TerminusCMS), enums, and other values into your database. As well as covering how to create and run a Python script, lesson 3 includes some handy tips, such as using schema.py to import the schema's classes and objects into the script.

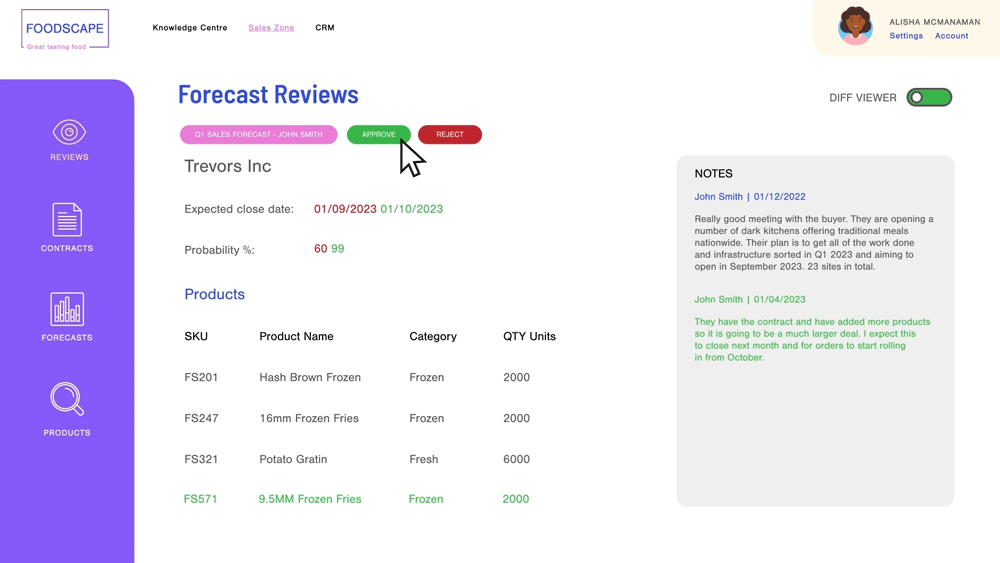

Lesson 4: Updating the database with new and amended data

Lesson 4 uses a Python script to add new records to the database you created and to amend the information already in it. Cheuk talks you through retrieving the schema and documents from the database so that you can update them or replace them with new information.

Tutorial: Lesson 4 – Updating the database with new and amended data
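Conceptually, amending data is a fetch, modify, write-back cycle. The sketch below models it with a plain dictionary keyed by document id, so it runs without a server. The `upsert` helper and the field names are hypothetical and are not the Python client's methods; they only illustrate how records with new ids are added while records whose ids already exist are overwritten.

```python
# Hypothetical in-memory store: document id -> document.
# This models the idea only; it is not the TerminusDB Python client API.
def upsert(store, docs):
    """Add documents with new ids and overwrite documents whose id exists.
    Returns (added_ids, replaced_ids)."""
    added, replaced = [], []
    for doc in docs:
        key = doc["@id"]
        (replaced if key in store else added).append(key)
        store[key] = dict(doc)  # keep a copy so later edits to `doc` don't leak in
    return added, replaced


store = {
    "Employee/001": {"@type": "Employee", "@id": "Employee/001",
                     "name": "Alice", "title": "Engineer"},
}

# Fetch an existing document, amend one field, and write it back
# together with a brand-new record.
amended = dict(store["Employee/001"], title="Team lead")
new_record = {"@type": "Employee", "@id": "Employee/005",
              "name": "Eve", "title": "Analyst"}
added, replaced = upsert(store, [amended, new_record])
print(added, replaced)  # ['Employee/005'] ['Employee/001']
```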

Lesson 5: Query the database and get results back in CSV or DataFrames

Cheuk shows you how to query the database and export either all of it or specific documents to CSV. She also explains in more detail how to export to pandas DataFrames, should you need a more in-depth analysis of your data.

Tutorial: Lesson 5 – Query the database and get results back in CSV or DataFrames

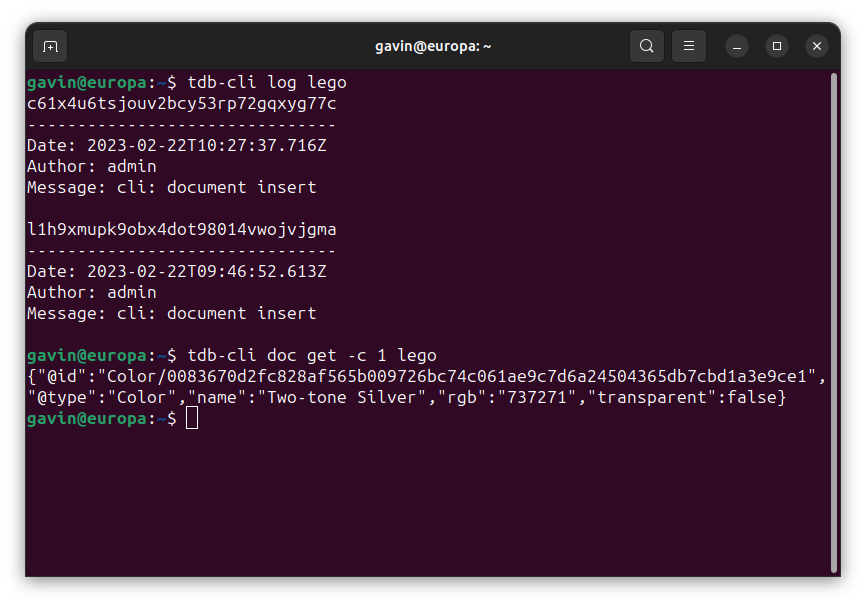

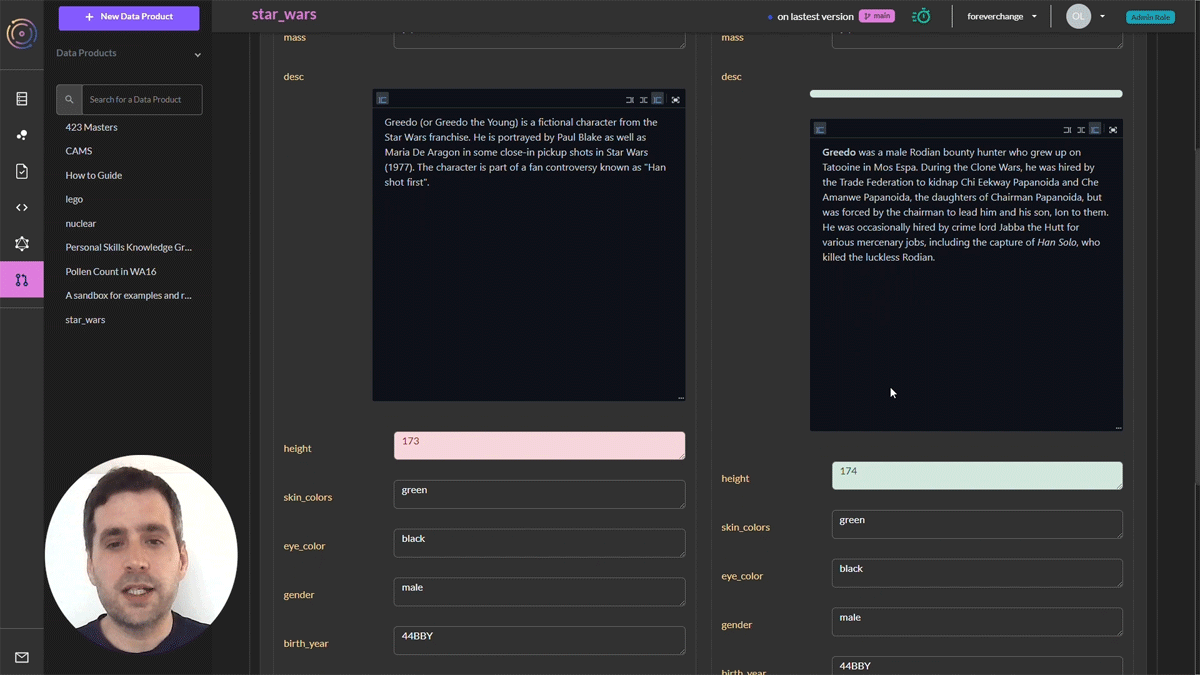
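Exporting documents to CSV essentially means flattening a list of JSON-style documents into rows. A minimal standard-library sketch, assuming hypothetical documents and using the union of their keys as the CSV columns, might look like this; it is not the client's own export code.

```python
import csv
import io


def documents_to_csv(docs):
    """Flatten a list of JSON-style documents into CSV text.
    Columns are the union of keys across all documents, in first-seen order;
    missing values are written as empty cells."""
    columns = []
    for doc in docs:
        for key in doc:
            if key not in columns:
                columns.append(key)
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=columns, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(docs)
    return buf.getvalue()


# Hypothetical documents, shaped like the results a document query might return.
docs = [
    {"@id": "Employee/001", "@type": "Employee", "name": "Alice"},
    {"@id": "Employee/002", "@type": "Employee", "name": "Bob",
     "manager": "Employee/001"},
]
print(documents_to_csv(docs))
```

If pandas is installed, passing the same list of dicts to `pandas.DataFrame` gives a DataFrame with the same columns (missing values become NaN), which is a convenient starting point for further analysis.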

Lesson 6: Version control – Branching, time travel, and rebase

Lesson 6 is all about version control: how branching supports collaborative work, and how you can time travel across different versions of your data and reset to a previous version, all from the Python client.

Tutorial: Lesson 6 – Version control, branching, time travel, and rebase
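To illustrate what branching, time travel, and reset mean for your data, here is a toy in-memory model. It is a hypothetical sketch, not how TerminusDB stores commits and not the Python client's API: each commit keeps a full snapshot, a branch is just a named pointer to a commit, and resetting moves that pointer back.

```python
import copy


# Toy model for illustration only: NOT TerminusDB's storage format or the
# Python client's API. Each commit is a full snapshot; a branch is a name
# pointing at a commit index.
class ToyRepo:
    def __init__(self):
        self.commits = [{}]          # commit 0 is the empty database
        self.branches = {"main": 0}  # branch name -> commit index

    def commit(self, branch, changes):
        """Copy the branch head, apply the changes, and record a new commit."""
        snapshot = copy.deepcopy(self.commits[self.branches[branch]])
        snapshot.update(changes)
        self.commits.append(snapshot)
        self.branches[branch] = len(self.commits) - 1
        return self.branches[branch]

    def create_branch(self, name, from_branch="main"):
        """A new branch starts at the same commit as an existing one."""
        self.branches[name] = self.branches[from_branch]

    def read(self, branch=None, commit=None):
        """Read a branch head, or 'time travel' by reading any earlier commit."""
        index = commit if commit is not None else self.branches[branch]
        return self.commits[index]

    def reset(self, branch, commit):
        """Move the branch pointer back to an earlier commit."""
        self.branches[branch] = commit


repo = ToyRepo()
c1 = repo.commit("main", {"Employee/001": {"name": "Alice"}})
repo.create_branch("contractors")  # hypothetical branch name
repo.commit("contractors", {"Employee/101": {"name": "Carol"}})
c3 = repo.commit("main", {"Employee/002": {"name": "Bob"}})

print(sorted(repo.read("main")))         # ['Employee/001', 'Employee/002']
print(sorted(repo.read("contractors")))  # ['Employee/001', 'Employee/101']
repo.reset("main", c1)
print(sorted(repo.read("main")))         # ['Employee/001']
print(sorted(repo.read(commit=c3)))      # ['Employee/001', 'Employee/002']
```

In this model, resetting main does not delete the later commit: it is still readable by its index, which is what makes time travel possible, while the contractors branch is unaffected by changes on main.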